Mandelbrots Cluster

Posted: January 8, 2022

Introduction

To learn how to create clusters with Kubernetes, I came up with a couple of fun project ideas to mess with. The first was Cloudtari, which was completed over the summer of 2021, and this is the second. The idea is to create a cluster of containers that compute frames of Mandelbrots and create a video out of them. This project is, in a way, an extension of the parallel computing Mandelbrots project, but since it needed a longer explanation it seemed better to give it its own page.

Below is a video of the final animation along with a longer description of how this was done, including source code and some common Kubernetes commands used to build this project. Much of this page is notes for myself for the next time I use Kubernetes, but hopefully someone else will find it useful too.

Video

Above is a video of all 4668 frames playing at 60 frames per second. The music is a song I wrote for the Amiga Java demo from a couple years ago.

YouTube: https://www.youtube.com/watch?v=qPHeCIEUwjw

Above is the second video (described at the bottom of the page) with code written in ARM64 assembly using vector instructions. This one uses hardware 32 bit floats instead of the more accurate BigFloat code.

YouTube: https://youtu.be/ik6zo5phoz8

Related Projects @mikekohn.net

| Mandelbrots: | Mandelbrots SIMD, Mandelbrot Cluster, Mandelbrot MSP430 |

Source Code

https://github.com/mikeakohn/mandelbrot_cluster

Before the Pi's

The project began with the creation of BigFixed.h, which is a C++ class for doing math on 256 bit fixed point numbers. There are probably libraries that can do this faster, but I learn a lot more doing things myself. The mandelbrot.cxx code can generate images based on either floating point values or the 256 bit binary representation of a BigFloat given as hex digits. In the src directory is a file create_coordinates.cxx which was used to generate all the coordinates in the video. This program creates a coordinates.txt file which is used later on the web server by load_db.py to create and load a sqlite3 database.

Setup

The cluster is four Raspberry Pi 4's, each running 64 bit Ubuntu 20.04. Since each RPI4 has four cores, the idea is to always have 16 pods (16 containers) running at the same time, one running pod per core. The Pi's are stacked in a GeekPi cluster case with a fan in the back to keep them cool. Each Pi has microk8s Kubernetes, Docker, and the nginx web server installed on it. To install microk8s (or uninstall it, as I had to do at some point to clean up some problems as explained later) I did:

sudo snap install microk8s --classic

sudo snap remove microk8s

Adding the login username to the microk8s group in /etc/group was also helpful so I didn't keep having to type sudo to run it. The Pi's are all 4GB models but different revisions:

kubernetes-0: Raspberry Pi 4 Model B Rev 1.1

kubernetes-1: Raspberry Pi 4 Model B Rev 1.2

kubernetes-2: Raspberry Pi 4 Model B Rev 1.4

kubernetes-3: Raspberry Pi 4 Model B Rev 1.4

One thing I did forget to do was add some compiler flags to try to speed up the code. From what I remember from playing with this earlier, that didn't seem to make any noticeable difference anyway.

Web Server

The webserver consisted of two PHP scripts running on nginx with a sqlite3 database to keep track of each frame's coordinates, completion status, time started, time finished, and the IP address of the node that worked on that frame. This gives information on how long each frame took to generate and can help show how evenly the frames were distributed across the cluster. Other than adding PHP/sqlite3, I had to make one change to nginx's config (client_max_body_size 8M;) to allow uploads bigger than 1MB in size.
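As a rough illustration of the kind of bookkeeping described above, here is a minimal Python sketch using the standard sqlite3 module. The table name, column names, IP address, and timestamps are all made up for this example; the real schema is whatever load_db.py and the PHP scripts in the repo use.

```python
import sqlite3

# Hypothetical schema: the table and column names are assumptions made for
# this sketch, not the ones used by load_db.py or the PHP scripts.
SCHEMA = """
CREATE TABLE frames (
    id INTEGER PRIMARY KEY,
    coordinates TEXT NOT NULL,
    status TEXT NOT NULL DEFAULT 'pending',
    time_started INTEGER,
    time_finished INTEGER,
    node_ip TEXT
)
"""

def claim_next_frame(db, node_ip, now):
    """Mark the lowest-numbered pending frame as started and return
    (id, coordinates), or return None when nothing is pending."""
    row = db.execute(
        "SELECT id, coordinates FROM frames "
        "WHERE status = 'pending' ORDER BY id LIMIT 1"
    ).fetchone()
    if row is None:
        return None
    db.execute(
        "UPDATE frames SET status = 'started', time_started = ?, node_ip = ? "
        "WHERE id = ?",
        (now, node_ip, row[0]),
    )
    db.commit()
    return row

def finish_frame(db, frame_id, now):
    """Record the upload time for a frame and mark it done."""
    db.execute(
        "UPDATE frames SET status = 'done', time_finished = ? WHERE id = ?",
        (now, frame_id),
    )
    db.commit()

def frame_durations(db):
    """Return (id, node_ip, seconds) for every completed frame."""
    return db.execute(
        "SELECT id, node_ip, time_finished - time_started FROM frames "
        "WHERE status = 'done' ORDER BY id"
    ).fetchall()

# Demo with an in-memory database and one frame.
db = sqlite3.connect(":memory:")
db.execute(SCHEMA)
db.execute(
    "INSERT INTO frames (coordinates) VALUES (?)",
    ("-0.1692 -0.1492 -1.0442 -1.0192",),
)
frame = claim_next_frame(db, "192.168.1.10", now=1000)
finish_frame(db, frame[0], now=1026)
print(frame_durations(db))
```

With 16 workers asking for frames at once, a real server would also want the select and update to happen atomically so two workers can't claim the same frame; this sketch skips that.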

Docker

The Docker container is created by a Makefile in scripts/docker which creates an image using the Dockerfile there. Inside the container are worker_node.py, worker_node.sh, and the mandelbrot executable.

The worker_node.py script is the main script. It makes a request to next.php on the webserver to get a coordinate to process, processes it, and uploads the result back to the webserver by posting to the save_image.php script. As a part of the processing, the script uses ImageMagick to crop the image from 1024x1024 to 1024x768 and convert it from bmp to jpeg. The worker_node.py script can also be run outside of the Docker container, which is how it was tested to make sure it works.
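The request / process / upload cycle can be sketched roughly like this. This is not the actual worker_node.py: fetch_next, render, and upload are hypothetical callables standing in for the request to next.php, the mandelbrot executable plus the ImageMagick step, and the post to save_image.php. The "empty" reply and the 50 frame limit come from the cluster description further down the page.

```python
def run_worker(fetch_next, render, upload, max_frames=50):
    """Process frames until the server replies "empty" or max_frames
    frames have been handled. Returns the number of frames processed.

    fetch_next, render and upload are placeholders for the HTTP GET,
    the image generation, and the HTTP POST.
    """
    done = 0
    while done < max_frames:
        coordinate = fetch_next()
        if coordinate == "empty":
            break
        image = render(coordinate)
        upload(coordinate, image)
        done += 1
    return done

# Demo with an in-memory queue instead of a web server.
queue = ["frame-a", "frame-b", "frame-c"]
uploaded = []
count = run_worker(
    fetch_next=lambda: queue.pop(0) if queue else "empty",
    render=lambda c: f"image for {c}",
    upload=lambda c, img: uploaded.append((c, img)),
)
print(count, uploaded)
```

Passing the three steps in as functions is just a convenience for trying the loop without a cluster, which is in the same spirit as running the script outside of Docker to test it.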

In order for Kubernetes to have access to the container, it must be added to a local registry. The code to do that is in the Makefile, but another command is needed to initialize the registry. Altogether, without the Makefile, the commands to create the Docker container and push it would be:

microk8s enable registry

docker build -t mandelbrot:local .

docker image tag mandelbrot:local localhost:32000/mandelbrot:local

docker push localhost:32000/mandelbrot:local

The Cluster

The original idea was to fork out 16 pods which would continue requesting a frame to process from the webserver until the webserver replies with "empty". When I started running that I noticed that pods were not evenly distributed among the four RPI4's. My guess is that because the workload on each RPI4 at the start wasn't very high, Kubernetes couldn't figure out the best system to run each pod on. My next attempt was to create the job as 4668 completions (4668 forked out pods) with parallelism set to 16 so that only 16 pods would be running at a single time. This way, as each pod is started, the system with the most available resources would get the pod. This worked great until around 4422 completions. After that, Kubernetes got clogged. Badly. More on that nightmare below.

The last thing I tried was to do 94 completions with the parallelism set to 16 again. The worker_node.py script was changed to process 50 frames before quitting, so 94 * 50 = 4700, which is enough to cover all 4668 frames. This way the first few completions may be imbalanced, but after a while they should even out.
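The numbers for that third design can be worked out with a few lines of Python. The manifest below is only a sketch of what a batch/v1 Job with these settings could look like, not the file from the repo: the job name and image tag are taken from elsewhere on this page, and the container name is a placeholder.

```python
import json
import math

def completions_needed(total_frames, frames_per_pod):
    """Number of pod runs needed so that every frame gets processed."""
    return math.ceil(total_frames / frames_per_pod)

def job_manifest(completions, parallelism):
    """Build a batch/v1 Job as a dict. The container name is a placeholder;
    the image is the tag pushed to the local registry in the Docker section."""
    return {
        "apiVersion": "batch/v1",
        "kind": "Job",
        "metadata": {"name": "mandelbrot"},
        "spec": {
            "completions": completions,
            "parallelism": parallelism,
            "template": {
                "spec": {
                    "containers": [
                        {
                            "name": "mandelbrot",
                            "image": "localhost:32000/mandelbrot:local",
                        }
                    ],
                    "restartPolicy": "Never",
                }
            },
        },
    }

completions = completions_needed(4668, 50)
manifest = job_manifest(completions, parallelism=16)
print(completions, completions * 50)
print(json.dumps(manifest, indent=2))
```

Since 94 * 50 is a bit more than 4668, the last pod to run simply gets "empty" from the webserver before reaching its 50 frame limit. kubectl accepts JSON manifests as well as YAML, so output like this could be saved to a file and applied with microk8s.kubectl apply -f.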

To build the cluster, microk8s was installed on the four RPI4's. To add systems to the cluster, from the first RPI4 the following is run:

microk8s.add-node --token-ttl

From the other systems, simply running the command given by add-node will add that node to the cluster. To show all nodes in the cluster:

microk8s.kubectl get nodes

Using the third cluster design (50 frames per worker, 94 completions) all frames took 170 minutes to generate.

$ microk8s.kubectl get jobs

NAME COMPLETIONS DURATION AGE

mandelbrot 94/94 170m 2d9h
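For a rough feel of what 170 minutes means per frame, the arithmetic can be done as below. This assumes all 16 pods were busy the whole time and that the 170m reported by kubectl is close to exact, so the results are ballpark figures only.

```python
def throughput(total_frames, minutes, parallelism):
    """Return (frames per minute, cluster seconds per frame,
    average seconds a single pod spent per frame)."""
    seconds = minutes * 60
    frames_per_minute = total_frames / minutes
    cluster_seconds_per_frame = seconds / total_frames
    pod_seconds_per_frame = cluster_seconds_per_frame * parallelism
    return frames_per_minute, cluster_seconds_per_frame, pod_seconds_per_frame

fpm, cluster_spf, pod_spf = throughput(4668, 170, 16)
print(f"{fpm:.1f} frames/minute")
print(f"{cluster_spf:.2f} s per frame across the cluster")
print(f"{pod_spf:.1f} s per frame per pod on average")
```

That works out to roughly 27 frames per minute for the whole cluster, or about 35 seconds per frame for each pod on average.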

Performance

The code was originally developed on an AMD Ryzen 7 3700X system. I ran some coordinates on several systems including an RPI4 with 64 bit FreeBSD, both 32 and 64 bit Linux, and an Nvidia Jetson. The 64 bit systems were clearly faster than the 32 bit ones, but between FreeBSD and the Nvidia Jetson it was hard to tell, since differences in compiler version and the like could affect the results. It didn't feel right posting benchmarks for that.

Running on the Ryzen 7 at coordinates [ -0.1692 -0.1492 -1.0442 -1.0192 ], it takes about 6.3s, while on one of the Raspberry Pi 4's it takes around 25.8s. I did try running on nodes 0 and 3 just to make sure one revision isn't faster than the other, and they seem pretty much the same.

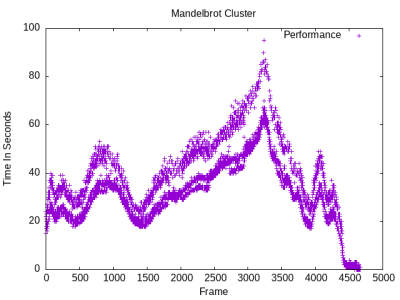

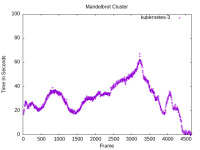

As for the time it took to compute each frame, since the sqlite3 database on the webserver records a timestamp of when each frame was requested and when it was uploaded, I was able to create some gnuplot graphs (the scripts themselves, minus the sql queries, are in the repo). Interestingly, there's a split in the chart that looks like one system was a little slower than the others:

Since the database has information on which system worked on each frame, the slower host could be identified as the controlling host, which also runs the web server and database. Here are charts for each single host:

The distribution of frame processing ended up being:

kubernetes-0: 950 frames

kubernetes-1: 1242 frames

kubernetes-2: 1237 frames

kubernetes-3: 1239 frames
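As a quick check on those numbers, here is a small Python sketch that totals the counts listed above and shows each node's share of the work. Only the four counts come from this page; everything else is plain arithmetic.

```python
frames_per_node = {
    "kubernetes-0": 950,
    "kubernetes-1": 1242,
    "kubernetes-2": 1237,
    "kubernetes-3": 1239,
}

# Total frames and the fraction of them each node handled.
total = sum(frames_per_node.values())
shares = {node: count / total for node, count in frames_per_node.items()}

print(f"total frames: {total}")
for node, share in shares.items():
    print(f"{node}: {share:.1%}")
```

The counts add up to the full 4668 frames. The node with the fewest frames handled about 20% of them, while the other three each handled a little over 26%.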

Issues

The biggest issue I encountered came when I set parallelism to 16 and completions to 4668 (the number of frames needing to be generated). It was working fine up until about completion 4422, at which point it seemed to just stop forking out pods:

$ microk8s.kubectl get jobs

NAME COMPLETIONS DURATION AGE

mandelbrot 4422/4668 5h14m 5h14m

At this point, since no new frames had been generated for a couple of hours or more, I decided to kill the job. I was going to start it up again, but I noticed that none of the pods created had disappeared. I decided to try a reboot on all systems. When they came back, all the pods were still there. I tried killing pods manually. Sometimes it would say they were deleted, but a lot of the time it would come back with an error message. I can't remember what exactly, but they were usually about not being able to connect to a local socket. I tried to kick all the nodes off the cluster so I could do a reset, but a few minutes after kicking them (and verifying they were gone with kubectl get nodes) they would come back. I was finally able to tell each node to leave and do the reset, but when it came back all the pods were still there. I did a reset with a clear storage and that actually bumped it down to about 500 pods. When I finally got it down to 0 pods I still couldn't get Kubernetes to run a job that would fill in the last 246 frames, so I uninstalled Kubernetes and reinstalled it, and that took care of it.

Here are some useful commands used while fighting this issue:

microk8s.kubectl delete pod mandelbrot--1-4gql7

microk8s.kubectl delete --all pods --grace-period 0 --force

microk8s.kubectl patch pod mandelbrot-fntrq -p '{"metadata":{"finalizers":null}}'

microk8s reset --destroy-storage

After the system was stable again I had some other issues that I solved by having the worker_node.py script write to stdout and reading the logs on the pod. Also, someone at work recommended that if the above issue happens again, checking all the logs (the system logs being the important ones) might help:

microk8s.kubectl logs mandelbrot--1-4gql7

microk8s.kubectl get pods --all-namespaces

Encoding Video

To put all the generated frames into an avi/mjpeg file I used libkohn_avi. To turn the mjpeg file into a reasonably sized file that could be imported into iMovie, ffmpeg was used.

./jpeg2avi out.avi /var/www/html/mandelbrot_cluster/images/frame_%05d.jpeg 60

ffmpeg -i out.avi -pix_fmt yuv420p -b:v 20M mandelbrot_cluster_no_audio.mp4

The video on YouTube lost quite a bit of detail, but still looks pretty okay.

ARM64 SIMD

Updated: May 21, 2024

I did a new version of the project using 32 bit hardware floats and ARM64 SIMD vector instructions so that 4 pixels can be computed in parallel. Realizing the instructions above weren't quite enough information to reproduce what I did easily, I wrote down the steps taken. This time ansible was used to reduce the number of times needed to log into the worker nodes.

ansible cluster -m ping -i inventory.ini

ansible clients -m ansible.builtin.shell -a 'sudo halt' -i inventory.ini

ansible clients -m ansible.builtin.shell -a 'sudo reboot' -i inventory.ini

ansible clients -m ansible.builtin.shell -a 'sudo usermod -a -G microk8s ubuntu' -i inventory.ini

The steps were basically: Install docker, install microk8s, install nginx / php / sqlite3, enable microk8s registry, build docker container, push container to microk8s, and then run the job.

The four Raspberry Pi 4's already had Ubuntu Server 23.10 on them, so I did all this there first, but I couldn't get the microk8s local registry working. With the microk8s registry disabled, it errored with connection refused, but once the registry was enabled it would just hang. nmap said port 32000 was filtered. After a bit of frustration, Ubuntu Server 24.04 was installed on the four Pi's, and after running through the install steps it just flat out worked.

The steps to set up microk8s this time were:

sudo snap install microk8s --classic

microk8s enable registry

vim /etc/docker/daemon.json (then add):

{

"insecure-registries":[ "localhost:32000" ]

}

sudo systemctl daemon-reload

sudo systemctl restart docker

cd scripts/docker_arm64/

make

make push

(install nginx, php-fpm, sqlite3, php-sqlite3, then configure)

cd scripts/html/

python3 load_db_arm64.py

cp coordinates.db /var/www/html/mandelbrot_cluster/

cp next_arm64.php /var/www/html/mandelbrot_cluster/

cp save_file.php /var/www/html/mandelbrot_cluster/

(chmod the dirs so the database can be written to)

microk8s.add-node --token-ttl=3600

(add the other nodes)

cd scripts/kubernetes_arm64/

make

Copyright 1997-2026 - Michael Kohn